Google DeepMind predicts future of AI: 4 risks that could threaten humanity

DeepMind coined a shocking term and warned about the danger (photo: Getty Images)

Experts from Google DeepMind have presented a detailed technical report on the safe development of Artificial General Intelligence (AGI). According to them, AGI may emerge in the near future, despite the doubts of skeptics.

Here are the four key scenarios in which such AI could cause harm, as reported by Ars Technica.

Contents

- What is Google DeepMind

- What is AGI (Artificial General Intelligence)

- Four types of AGI threats

- What will AGI be like in five years

What is Google DeepMind

Google DeepMind is a British artificial intelligence (AI) company, founded in 2010 and acquired by Google in 2014.

DeepMind builds AI systems that learn and make decisions by mimicking human cognitive processes. The company is known for its achievements in machine learning and neural networks, as well as for creating algorithms that solve complex problems.

DeepMind first became widely known for its game-playing AI. In 2017, it unveiled AlphaZero, a system that taught itself to play chess, shogi, and Go at a superhuman level through self-play, given only the rules of each game and no human game data. Beyond games, DeepMind is actively applying AI in medicine, developing algorithms to help diagnose diseases such as diabetic retinopathy and cancer.

The company has also applied AI to Google's own infrastructure, for example to improve the energy efficiency of its data centers.

The company continues to attract the attention of the global scientific community thanks to its innovations and its efforts to create more advanced and safer AI technologies.

What is AGI (Artificial General Intelligence)

Artificial General Intelligence (AGI) refers to a system whose intelligence and abilities are comparable to those of humans across a wide range of tasks.

If current AI systems are indeed moving towards AGI, humanity must develop new approaches to ensure that such a machine does not become a threat.

Unfortunately, we do not yet have anything as elegant as Isaac Asimov's Three Laws of Robotics. DeepMind researchers are tackling this problem and have published a new technical paper explaining how AGI could be developed safely.

While many experts consider AGI to be science fiction, the authors of the paper suggest that such a system could emerge by 2030. With this in mind, the DeepMind team set out to study the potential risks of a synthetic intelligence with human-like traits, which, as the researchers themselves admit, could cause "serious harm."

Four types of AGI threats

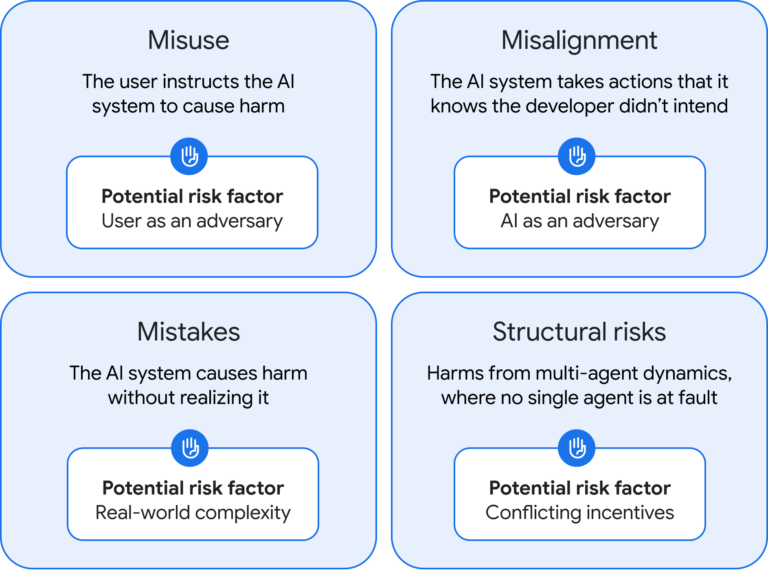

The DeepMind team, which includes company co-founder Shane Legg, has identified four categories of potential AGI-related threats: misuse, misalignment, mistakes, and structural risks.

Misuse

The first threat is misuse. In essence, it resembles the risks posed by current AI systems, but AGI will by definition be far more powerful, so the potential harm will be much greater. For example, a person with malicious intent could use AGI to find zero-day vulnerabilities or to create designer viruses for use as biological weapons.

DeepMind emphasizes that companies developing AGI must conduct thorough testing and put robust safety protocols in place after the model is trained. In short, stronger constraints on AI are needed.

The researchers also propose suppressing dangerous capabilities entirely, an approach known as "unlearning," although it is still unclear whether this is possible without significantly limiting the models' functionality.

Misalignment

This threat is less relevant to today's generative AI, but for AGI it could be fatal: imagine a machine that stops obeying its developers. This is no longer the stuff of science fiction like The Terminator but a real threat, since an AGI could knowingly take actions that contradict its creators' intentions.

As a solution, DeepMind proposes "amplified oversight," in which two copies of an AI check each other's outputs. It also recommends stress testing and continuous monitoring to detect signs that an AI has "gone out of control."

The researchers also suggest isolating such systems in secure virtual environments under direct human control, complete with a "red button."

Mistakes

If an AI causes harm without intending to, and its operator did not anticipate this possibility, DeepMind classifies it as a mistake. The researchers warn that militaries may begin deploying AGI under "competitive pressure," and because AGI will be far more complex than today's systems, its mistakes could be far more serious.

The researchers suggest several remedies: not letting AGI become too powerful in the first place, deploying it gradually, and limiting its authority. They also propose passing its commands through a "shield," an intermediate system that checks whether they are safe.

Structural risks

The final category is structural risks. This refers to the unintended but real consequences of multi-agent systems operating within an already complex human society.

AGI could, for example, generate misinformation so convincing that we could no longer trust anyone. Or it could slowly and imperceptibly take control of the economy and politics, for instance by devising complex tariff schemes. One day, we might find that we no longer control the machines, but that they control us.

This type of risk is the hardest to predict, as it depends on a multitude of factors, from human behavior to infrastructure and institutions.

The four categories of AGI risk, as defined by DeepMind (photo: Google DeepMind)

What will AGI be like in five years

No one knows for sure whether intelligent machines will emerge within a few years, but many in the industry are confident it is possible. The problem is that we still do not understand how the human mind could be embodied in a machine. Generative AI has made enormous progress in recent years, but will this lead to full AGI?

DeepMind emphasizes that the paper is not a definitive guide to AGI safety but rather "a starting point for vital conversations." If the team is right and AGI does emerge within five years, these discussions must begin as soon as possible.
